February 15, 2026

Understanding and Addressing Bias in Recruitment

The hiring process represents one of the most critical functions within any organization, yet it remains vulnerable to cognitive biases that can undermine fair evaluation and limit access to the best talent. Bias in recruitment manifests in numerous ways, from initial resume screening through final selection decisions, creating barriers that prevent qualified candidates from advancing while simultaneously limiting organizational diversity and innovation. Understanding these biases and implementing systematic approaches to minimize their impact has become essential for building competitive, inclusive workforces in 2026.

The Nature and Impact of Recruitment Bias

Recruitment bias occurs when hiring decisions are influenced by subjective factors unrelated to job performance or qualifications. These biases operate both consciously and unconsciously, affecting how recruiters and hiring managers evaluate candidates throughout the selection process. Research from Thomas International on unconscious bias demonstrates that even well-intentioned professionals make biased decisions when cognitive shortcuts override objective assessment.

The consequences of bias in recruitment extend far beyond individual hiring mistakes. Organizations that fail to address these issues end up with less diverse workforces, and lower diversity is associated with weaker innovation and problem-solving capacity. Many studies report that diverse teams can outperform homogeneous ones across multiple dimensions, yet biased recruitment practices systematically exclude talented candidates based on characteristics that bear no relationship to job performance.

Financial implications accompany these quality concerns. Poor hiring decisions resulting from bias lead to increased turnover costs, reduced productivity, and potential legal liability. Organizations face reputational damage when bias becomes visible to external stakeholders, affecting their ability to attract top talent in competitive markets.

Common Types of Bias Affecting Hiring Decisions

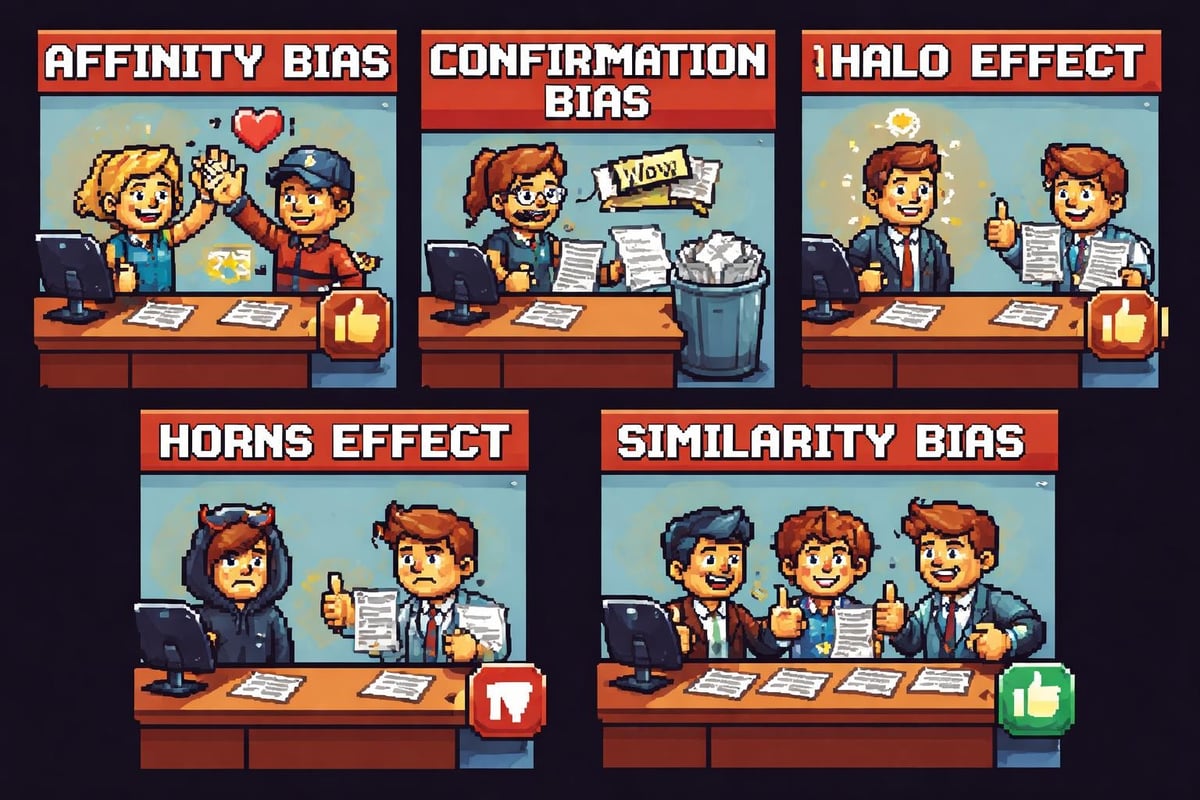

Affinity bias represents one of the most pervasive forms of prejudice in recruitment. This occurs when evaluators favor candidates who share similar backgrounds, interests, or experiences. A hiring manager who attended a prestigious university may unconsciously favor applicants from that institution, regardless of whether that credential predicts job success. This tendency creates self-replicating organizational cultures that exclude different perspectives.

Confirmation bias affects how recruiters process information about candidates. Once an initial impression forms, evaluators seek evidence that supports their hypothesis while discounting contradictory data. A recruiter who develops an early negative impression based on a minor resume detail may focus on weaknesses during the interview while overlooking significant strengths that should override initial concerns.

The halo effect causes evaluators to allow one positive attribute to influence their assessment of unrelated characteristics. A candidate who presents exceptionally well in interviews may receive inflated ratings for technical skills that were not adequately assessed. Conversely, the horns effect works in reverse, where one perceived weakness contaminates the evaluation of otherwise strong qualifications.

Beauty bias, or lookism, influences hiring decisions even though appearance bears no relationship to job performance in most roles. Studies demonstrate that conventionally attractive candidates receive higher ratings and more favorable treatment throughout the recruitment process. This bias intersects with other forms of prejudice, compounding disadvantages for certain demographic groups.

Name bias can affect candidates before a reviewer has given any real attention to their qualifications. Research consistently shows that identical resumes receive different callback rates based solely on the perceived ethnicity or gender of the applicant's name. This barrier prevents talented candidates from advancing past initial screening stages.

Sources of Bias Throughout the Recruitment Process

Resume screening represents the first opportunity for bias to influence hiring outcomes. Traditional manual review processes require evaluators to make rapid judgments about large volumes of applications, creating conditions where cognitive biases flourish. Recruiters are often reported to spend only six to seven seconds on an initial resume review, far too little time for careful, objective assessment. During these brief evaluations, factors like university names, employment gaps, or unconventional career paths trigger biased responses that eliminate qualified candidates.

Research on algorithmic bias reveals that technology-based screening tools can perpetuate and amplify existing biases when not properly designed and monitored. AI systems trained on historical hiring data may learn to replicate past discriminatory patterns, creating the illusion of objectivity while systematically disadvantaging certain candidate groups. Organizations implementing AI-powered recruitment tools must carefully evaluate these systems for fairness.

Job descriptions themselves introduce bias before candidates even apply. Gendered language, unnecessary qualification requirements, and culturally specific references create barriers for diverse applicants. Research on gendered wording in job advertisements has found that masculine-coded terms like "aggressive," "competitive," and "dominant" make roles less appealing to women, while words like "collaborative" and "supportive" read as feminine-coded. Requirements for specific educational credentials or years of experience may exclude candidates with alternative pathways to competence.

Interview processes provide multiple opportunities for bias to affect outcomes. Unstructured interviews, where different candidates receive different questions, make objective comparison impossible while maximizing opportunities for subjective preferences to dominate decisions. Interviewer fatigue, ordering effects, and contrast effects further compromise evaluation quality.

The Challenge of Unconscious Bias

Unconscious bias presents particular challenges because evaluators remain unaware of how their judgments are being influenced. These mental shortcuts evolved as efficient information processing mechanisms, allowing rapid decision making in complex environments. However, what served survival purposes in ancestral contexts creates systematic unfairness in modern recruitment.

According to research on unconscious bias in hiring, even individuals who consciously reject prejudice and value diversity demonstrate implicit biases when measured through behavioral assessments. These automatic associations operate faster than conscious reasoning, influencing snap judgments about candidate suitability before deliberate analysis begins.

The implicit nature of unconscious bias means that simply raising awareness provides insufficient protection. Diversity training that focuses solely on explaining bias types often fails to produce behavioral change because knowing about bias does not prevent it from operating. Effective interventions require structural changes to decision-making processes that reduce opportunities for bias to influence outcomes.

Context dependency compounds the challenge of unconscious bias. The same evaluator may demonstrate different bias patterns depending on factors like time pressure, cognitive load, and recent experiences. A recruiter who performs relatively objectively under optimal conditions may exhibit significant bias when rushed, distracted, or fatigued.

Data-Driven Approaches to Identifying Bias

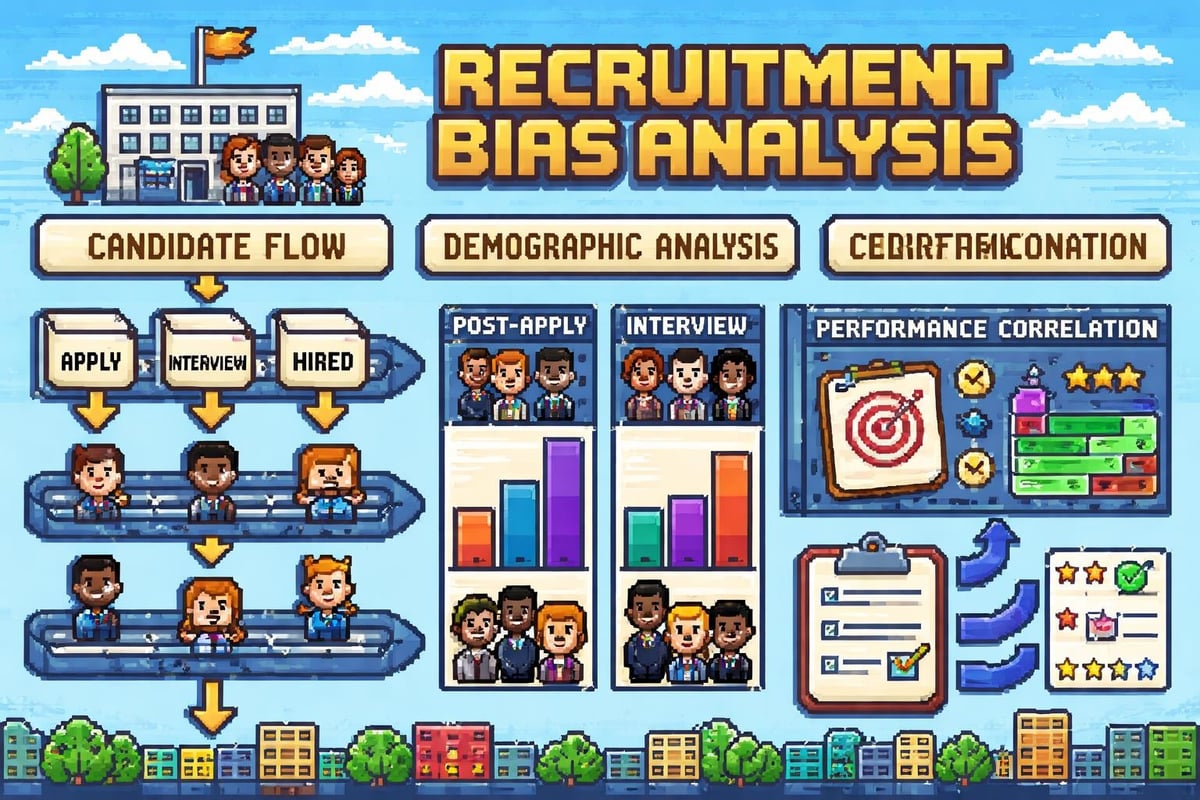

Measuring bias in recruitment requires systematic analysis of hiring data across multiple dimensions. Organizations should track application, interview, and offer rates for different demographic groups at each stage of the recruitment funnel. Significant disparities between qualified applicant pools and final hires signal potential bias requiring investigation.
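As a rough illustration of stage-by-stage tracking, the Python sketch below computes, for each group, the share of candidates who advance from one funnel stage to the next. The stage names, the `stage_conversion_rates` function, and the example counts are all hypothetical; a real pipeline would pull these figures from an applicant tracking system.

```python
from typing import Dict, List

# Hypothetical funnel stages, in order; real pipelines may use different ones.
STAGES: List[str] = ["applied", "interviewed", "offered", "hired"]

def stage_conversion_rates(counts: Dict[str, Dict[str, int]]) -> Dict[str, Dict[str, float]]:
    """For each group, the share of candidates who advance from each stage to the next.

    `counts` maps group -> stage -> number of candidates who reached that stage.
    """
    rates: Dict[str, Dict[str, float]] = {}
    for group, by_stage in counts.items():
        rates[group] = {}
        for current, following in zip(STAGES, STAGES[1:]):
            entered = by_stage.get(current, 0)
            advanced = by_stage.get(following, 0)
            # An empty stage has no conversion rate; report 0.0 rather than dividing by zero.
            rates[group][f"{current}->{following}"] = advanced / entered if entered else 0.0
    return rates

if __name__ == "__main__":
    example_counts = {  # made-up counts for illustration only
        "group_a": {"applied": 200, "interviewed": 40, "offered": 10, "hired": 8},
        "group_b": {"applied": 150, "interviewed": 15, "offered": 5, "hired": 4},
    }
    for group, group_rates in stage_conversion_rates(example_counts).items():
        print(group, {stage: round(rate, 2) for stage, rate in group_rates.items()})
```

With these made-up counts, both groups convert offers to hires at the same rate, but group_b is invited to interview at half the rate of group_a (0.10 versus 0.20). Breaking the funnel apart this way points an investigation at the screening stage rather than at offers, which comparing final hire counts alone would not reveal.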

Adverse impact analysis provides a statistical framework for identifying discriminatory patterns. Under the four-fifths rule in the US Uniform Guidelines on Employee Selection Procedures, a selection rate for any group that is less than four-fifths (80%) of the rate for the group with the highest selection rate is generally regarded as evidence of adverse impact. Organizations should conduct these analyses regularly, examining both overall patterns and specific decision points.
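The four-fifths check is simple arithmetic, so a short sketch makes it concrete. The functions below are hypothetical helpers, not part of any particular HR tool: each group's selection rate is divided by the highest group's rate, and any ratio under 0.8 is flagged.

```python
from typing import Dict, List

def adverse_impact_ratios(selected: Dict[str, int], applicants: Dict[str, int]) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = {g: selected.get(g, 0) / n for g, n in applicants.items() if n > 0}
    if not rates:
        return {}
    top = max(rates.values())
    # If nobody was selected at all, there is no disparity to measure.
    return {g: (r / top if top > 0 else 1.0) for g, r in rates.items()}

def four_fifths_flags(selected: Dict[str, int], applicants: Dict[str, int],
                      threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths threshold."""
    ratios = adverse_impact_ratios(selected, applicants)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)

if __name__ == "__main__":
    applicants = {"group_a": 100, "group_b": 80}  # hypothetical applicant counts
    selected = {"group_a": 30, "group_b": 16}     # hypothetical selections
    print(adverse_impact_ratios(selected, applicants))
    print(four_fifths_flags(selected, applicants))
```

In this example group_b is selected at 20% against group_a's 30%, an impact ratio of about 0.67, so it is flagged. The rule is a screening heuristic rather than a definitive test; with small samples, practitioners commonly pair it with a statistical significance test.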

Comparative analysis of similar candidates reveals bias operating at individual decision levels. When candidates with equivalent qualifications receive substantially different treatment, evaluator bias represents a likely explanation. This analysis becomes particularly powerful when examining large datasets where patterns emerge across multiple hiring decisions.
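One way to operationalize "equivalent qualifications" is to group candidates into strata of comparable qualification and compare advancement rates between demographic groups only within each stratum. The sketch below assumes, purely for illustration, that a work-sample score is a fair basis for stratifying; the field names and the `score_band` cut-off are hypothetical.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, Hashable, List

def stratified_advancement(
    candidates: List[dict],
    stratum_of: Callable[[dict], Hashable],
    group_field: str = "group",
    outcome_field: str = "advanced",
) -> Dict[Hashable, Dict[str, float]]:
    """Advancement rate per group, computed separately within each stratum
    of comparably qualified candidates."""
    # stratum -> group -> [number advanced, number considered]
    counts: DefaultDict[Hashable, DefaultDict[str, List[int]]] = defaultdict(
        lambda: defaultdict(lambda: [0, 0])
    )
    for c in candidates:
        cell = counts[stratum_of(c)][c[group_field]]
        cell[0] += 1 if c[outcome_field] else 0
        cell[1] += 1
    return {
        stratum: {group: adv / total for group, (adv, total) in by_group.items()}
        for stratum, by_group in counts.items()
    }

def score_band(candidate: dict) -> str:
    """Hypothetical stratum: split on a work-sample score of 70 out of 100."""
    return "70+" if candidate["work_sample"] >= 70 else "below 70"

if __name__ == "__main__":
    candidates = [  # made-up records for illustration only
        {"group": "a", "work_sample": 85, "advanced": True},
        {"group": "a", "work_sample": 80, "advanced": True},
        {"group": "b", "work_sample": 82, "advanced": False},
        {"group": "b", "work_sample": 88, "advanced": True},
        {"group": "a", "work_sample": 50, "advanced": False},
        {"group": "b", "work_sample": 55, "advanced": False},
    ]
    print(stratified_advancement(candidates, score_band))
```

A gap inside a stratum, such as group "a" advancing at 1.0 and group "b" at 0.5 among the high scorers here, is a prompt for closer review rather than proof of bias, especially when each cell holds only a handful of candidates.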

Feedback loop analysis examines relationships between hiring decisions and subsequent performance outcomes. When certain candidate characteristics predict selection but not performance, this suggests bias rather than valid assessment. Organizations should correlate hiring criteria with actual job success metrics to validate their evaluation approaches.
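A minimal version of this validation step is to correlate each hiring criterion with a later performance measure across past hires. The sketch below uses a plain Pearson correlation; the criterion names (`work_sample`, `elite_school`) and the `performance` rating are hypothetical stand-ins for whatever an organization actually records.

```python
import math
from statistics import mean
from typing import Dict, List, Sequence

def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient; 0.0 if either series has no variance."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least two values")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0

def criterion_validity(hires: List[Dict[str, float]], criteria: List[str],
                       outcome: str) -> Dict[str, float]:
    """Correlate each hiring criterion with a later job-performance outcome."""
    ys = [h[outcome] for h in hires]
    return {c: pearson([h[c] for h in hires], ys) for c in criteria}

if __name__ == "__main__":
    hires = [  # hypothetical records for four past hires
        {"work_sample": 60, "elite_school": 1, "performance": 2.5},
        {"work_sample": 70, "elite_school": 0, "performance": 3.0},
        {"work_sample": 80, "elite_school": 1, "performance": 3.5},
        {"work_sample": 90, "elite_school": 0, "performance": 4.0},
    ]
    print(criterion_validity(hires, ["work_sample", "elite_school"], "performance"))
```

In this toy data the work-sample score tracks performance closely while the school flag does not, which is the pattern the paragraph above describes as a sign of bias in selection. Note that correlations computed only on people who were hired can be distorted, because the later performance of rejected candidates is never observed.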

Structured Interventions to Reduce Recruitment Bias

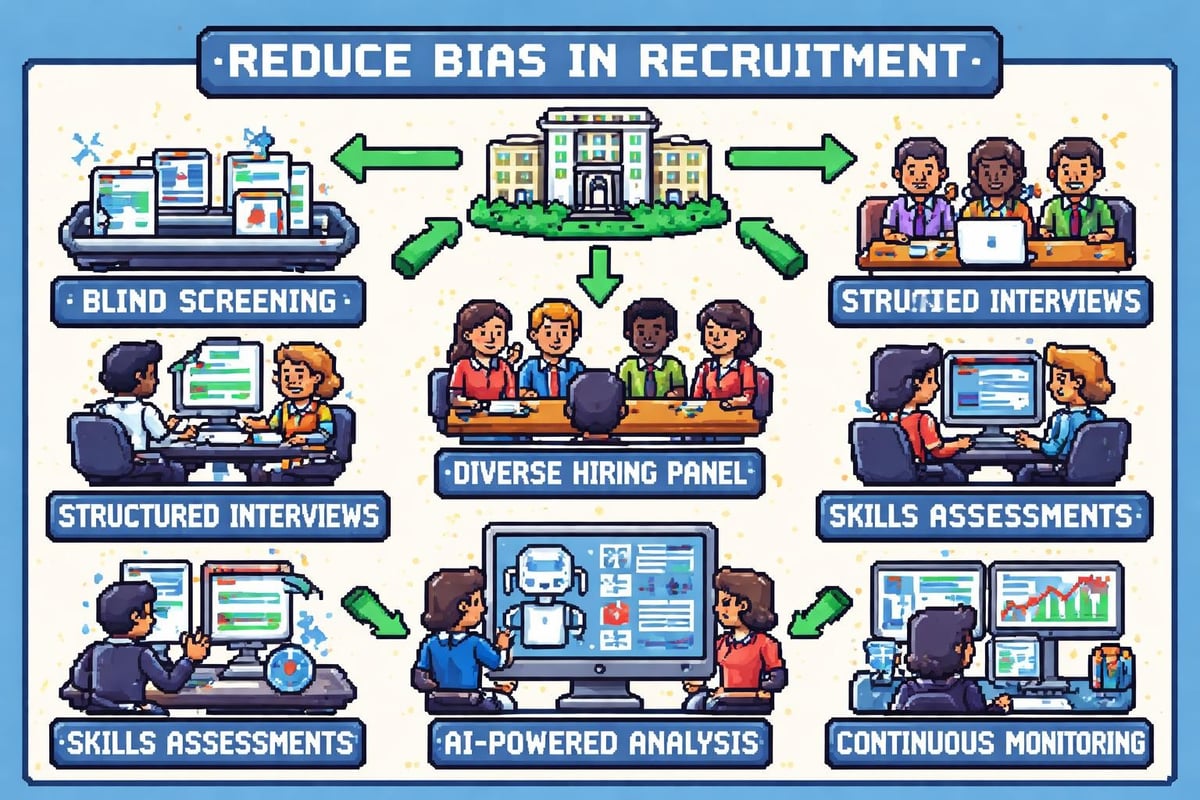

Blind resume screening removes demographic information that triggers unconscious bias. Redacting names, addresses, graduation dates, and other identifying details forces evaluators to focus on job-relevant qualifications. Organizations implementing blind screening typically observe increased diversity in candidate pools advancing to interview stages. Modern CV screening software can automate this process while ensuring consistent application of selection criteria.
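To show what redaction can look like in practice, here is a minimal sketch that assumes candidate data arrives as a structured record. It is not how any specific CV screening product works; the field names in `IDENTIFYING_FIELDS` and the `blind_profile` helper are hypothetical. Identifying fields are dropped, and the candidate's name is masked wherever it appears in free text.

```python
import re
from typing import Any, Dict

# Hypothetical field names; map these to whatever your applicant tracking system exports.
IDENTIFYING_FIELDS = {"name", "email", "phone", "address", "date_of_birth",
                      "graduation_year", "photo_url"}

def blind_profile(candidate: Dict[str, Any]) -> Dict[str, Any]:
    """Copy of a candidate record with identifying fields dropped and the
    candidate's name masked inside any free-text fields."""
    name_parts = str(candidate.get("name", "")).split()
    name_pattern = (
        re.compile("|".join(rf"\b{re.escape(part)}\b" for part in name_parts), re.IGNORECASE)
        if name_parts else None
    )
    blinded: Dict[str, Any] = {}
    for key, value in candidate.items():
        if key in IDENTIFYING_FIELDS:
            continue  # omit the field entirely so reviewers never see it
        if name_pattern is not None and isinstance(value, str):
            value = name_pattern.sub("[REDACTED]", value)
        blinded[key] = value
    return blinded

if __name__ == "__main__":
    print(blind_profile({
        "name": "Jane Doe",
        "email": "jane@example.com",
        "graduation_year": 2012,
        "skills": ["Python", "SQL"],
        "summary": "Jane Doe led a team of five analysts.",
    }))
```

Field-level removal is more reliable than scrubbing free text, which can still reveal demographics indirectly through pronouns, club memberships, or institution names, so redaction rules benefit from periodic human review.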

Structured interviews standardize the evaluation process by asking all candidates identical questions in the same order. This approach enables genuine comparison while reducing opportunities for bias to influence questioning strategies. Research consistently demonstrates that structured interviews produce more valid predictions of job performance than unstructured conversations.

Competency-based assessment focuses evaluation on specific skills required for job success. By defining clear competencies and creating behavioral questions that probe for evidence of each skill, organizations can shift attention from subjective impressions to concrete demonstrations of capability. Scoring rubrics further enhance objectivity by establishing explicit standards for rating responses.
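A scoring rubric can be represented as data, which makes the standards explicit and the arithmetic consistent across interviewers. The sketch below is one possible shape under stated assumptions: the competencies, weights, behavioral anchors, and the 1 to 5 scale are all illustrative, and `rubric_score` is a hypothetical helper that rejects missing or out-of-range ratings rather than silently guessing.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

@dataclass(frozen=True)
class Competency:
    """One competency in a hypothetical interview rubric."""
    name: str
    weight: float  # relative importance for the role; the values below are illustrative
    anchors: Dict[int, str] = field(default_factory=dict)  # behavioral example per rating level

RUBRIC: List[Competency] = [
    Competency("problem_solving", 0.5, {
        1: "Describes no clear approach to the problem",
        3: "Breaks the problem into steps with some prompting",
        5: "Structures the problem unprompted and weighs trade-offs",
    }),
    Competency("communication", 0.3, {
        1: "Answer is hard to follow",
        3: "Clear answer with minor gaps",
        5: "Clear, concise answer tailored to the audience",
    }),
    Competency("collaboration", 0.2, {
        1: "Gives no example of working with others",
        3: "Gives an example with a limited personal role",
        5: "Gives a specific example of resolving a team conflict",
    }),
]

def rubric_score(ratings: Dict[str, int], rubric: List[Competency] = RUBRIC,
                 scale: Tuple[int, int] = (1, 5)) -> float:
    """Weighted average of per-competency ratings; rejects missing or out-of-range ratings."""
    low, high = scale
    total_weight = sum(c.weight for c in rubric)
    weighted = 0.0
    for c in rubric:
        if c.name not in ratings:
            raise ValueError(f"missing rating for {c.name}")
        rating = ratings[c.name]
        if not low <= rating <= high:
            raise ValueError(f"{c.name} rating {rating} is outside {low}-{high}")
        weighted += c.weight * rating
    return weighted / total_weight

if __name__ == "__main__":
    print(rubric_score({"problem_solving": 4, "communication": 3, "collaboration": 5}))
```

Requiring a rating for every competency prevents an interviewer from skipping areas where a candidate made a weaker impression, and the anchors give each number a shared behavioral meaning.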

Panel interviews distribute decision-making power across multiple evaluators with diverse perspectives. This approach reduces the influence of any single person's biases while creating accountability for individual judgments. When panel members independently score candidates before discussing their assessments, this preserves the benefit of multiple viewpoints while preventing groupthink.
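Independent scoring can be enforced by the tool that collects scores, not just by convention. The hypothetical `PanelRound` class below, a sketch rather than a feature of any real product, accepts one score per panelist for a candidate, refuses revisions, and reveals results only once everyone has submitted. It also flags wide disagreement (an assumed threshold of more than one rating point) as a cue for a calibration discussion.

```python
from statistics import mean
from typing import Dict, Iterable

class PanelRound:
    """Collects one candidate's scores from each panelist and reveals them only
    once every panelist has submitted, so no one anchors on another's rating."""

    def __init__(self, panelists: Iterable[str], disagreement_threshold: float = 1.0):
        self.panelists = set(panelists)
        self.threshold = disagreement_threshold
        self._scores: Dict[str, float] = {}

    def submit(self, panelist: str, score: float) -> None:
        if panelist not in self.panelists:
            raise ValueError(f"{panelist} is not on this panel")
        if panelist in self._scores:
            # No revising a score once it is in.
            raise ValueError(f"{panelist} has already submitted a score")
        self._scores[panelist] = score

    def reveal(self) -> Dict[str, object]:
        missing = self.panelists - set(self._scores)
        if missing:
            raise RuntimeError(f"still waiting on: {sorted(missing)}")
        values = list(self._scores.values())
        spread = max(values) - min(values)
        return {
            "scores": dict(self._scores),
            "mean": mean(values),
            "spread": spread,
            "discuss": spread > self.threshold,  # wide disagreement warrants discussion
        }

if __name__ == "__main__":
    round_ = PanelRound(["p1", "p2", "p3"])
    round_.submit("p1", 2)
    round_.submit("p2", 5)
    round_.submit("p3", 3)
    print(round_.reveal())
```

Keeping scores hidden until the last submission is what prevents the groupthink described above; the discussion then starts from three independent judgments rather than from whoever spoke first.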

Skills-based testing provides objective evidence of candidate capabilities. Work samples, technical assessments, and job simulations measure actual performance on tasks relevant to the role. These methods demonstrate higher validity than resume credentials or interview impressions while creating equitable evaluation opportunities.

Leveraging Technology to Minimize Bias

Artificial intelligence offers promising tools for reducing bias in recruitment when properly designed and deployed. Well-constructed AI systems can be designed to evaluate candidates on job-relevant criteria while limiting the influence of demographic characteristics. However, organizations must carefully monitor these systems to ensure they do not perpetuate historical biases embedded in training data.

Studies examining bias in AI-driven job-resume matching reveal that large language models can exhibit systematic preferences related to gender, race, and educational background unless specifically designed to mitigate these biases. Organizations implementing AI recruitment tools should demand transparency about how algorithms make decisions and regular auditing for fairness across demographic groups.

Automated screening tools can apply consistent evaluation criteria across all candidates, reducing the variability that enables bias in human review. These systems evaluate qualifications based on predefined requirements without fatigue or distraction. However, the criteria themselves must be carefully designed to avoid excluding qualified candidates through arbitrary requirements.

Predictive analytics can identify which candidate characteristics actually correlate with job success in specific organizational contexts. This evidence-based approach replaces assumptions and stereotypes with data-driven insights. Organizations can continuously refine their selection criteria based on performance outcomes, improving both fairness and effectiveness.

Natural language processing tools can identify biased language in job descriptions and other recruitment materials. These systems flag gendered terms, culturally specific references, and potentially exclusionary phrasing, enabling organizations to craft more inclusive communications that attract diverse applicant pools.
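The simplest form of this flagging is a word-list check. The sketch below is far cruder than real NLP tools and uses only the example terms mentioned earlier in this article, reduced to hypothetical stems; published gender-coded word lists are much longer, and every match should be reviewed by a person rather than removed automatically.

```python
import re
from typing import Dict, List

# Illustrative stems only, based on the example terms discussed above.
MASCULINE_CODED = ["aggress", "competit", "domina"]
FEMININE_CODED = ["collaborat", "support"]

def flag_coded_terms(text: str) -> Dict[str, List[str]]:
    """Return the words in `text` that start with a masculine- or feminine-coded stem."""
    words = re.findall(r"[a-z]+", text.lower())
    return {
        "masculine": [w for w in words if any(w.startswith(s) for s in MASCULINE_CODED)],
        "feminine": [w for w in words if any(w.startswith(s) for s in FEMININE_CODED)],
    }

if __name__ == "__main__":
    ad = ("We want an aggressive, competitive self-starter who thrives in a "
          "dominant market position and supports the team.")
    print(flag_coded_terms(ad))
```

Prefix matching catches variants such as "competition" and "dominate", at the cost of occasional false positives (a "support engineer" role, for instance), which is another reason to treat the output as suggestions.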

Building Accountability and Continuous Improvement

Establishing clear diversity goals creates accountability for reducing bias in recruitment. Organizations should set specific, measurable targets for demographic representation at each hiring stage and hold decision-makers responsible for progress. These goals should reflect both the available talent pool and the organization's commitment to inclusion.

Regular bias audits examine recruitment processes for fairness and effectiveness. These reviews should analyze quantitative hiring data, assess the validity of selection criteria, and gather qualitative feedback from candidates and evaluators. Findings should drive concrete process improvements rather than serving merely as documentation exercises.

Ongoing training helps recruitment professionals recognize and counteract bias. Unlike one-time awareness sessions, sustained development programs provide regular reinforcement of fair evaluation principles. Training should combine bias education with practical skill-building in structured assessment techniques and objective decision-making.

Feedback mechanisms enable continuous learning about bias in recruitment processes. Organizations should solicit input from candidates about their experiences, particularly those from underrepresented groups. This information reveals how processes feel to those affected by bias, often identifying issues that internal data alone cannot detect.

Transparency about hiring processes builds trust and creates external accountability. Organizations that publicly share diversity data and bias reduction efforts face greater pressure to follow through on commitments. This transparency also attracts candidates who value inclusive workplaces.

Legal and Ethical Considerations

Employment law establishes minimum standards for non-discrimination in hiring. Organizations must comply with regulations prohibiting bias based on protected characteristics including race, gender, age, disability, and religion. Beyond legal compliance, ethical considerations demand fair treatment of all candidates regardless of whether specific protections apply.

Documentation requirements serve both legal and operational purposes. Organizations should maintain records demonstrating that hiring decisions rest on job-relevant criteria applied consistently across candidates. This documentation protects against discrimination claims while supporting quality control efforts.

Reasonable accommodations for candidates with disabilities represent both legal obligations and opportunities to access broader talent pools. Organizations should proactively communicate willingness to modify processes and evaluate how standard procedures might disadvantage certain candidates.

According to research on fairness in algorithmic hiring, legal frameworks continue evolving to address technology-enabled discrimination. Organizations using AI recruitment tools should stay informed about emerging regulations and implement governance structures ensuring algorithmic fairness.

Privacy considerations affect how organizations collect and use candidate information. Limiting data collection to job-relevant factors reduces both legal risk and opportunities for bias. Organizations should establish clear policies about what information recruiters can access and how it may influence decisions.

Organizational Culture and Leadership Commitment

Leadership commitment determines whether bias reduction efforts produce meaningful change. When executives prioritize diversity and inclusion, these values permeate organizational culture and influence behaviors throughout the recruitment process. Conversely, superficial commitments yield minimal results regardless of which programs are implemented.

Inclusive culture attracts diverse candidates while supporting unbiased evaluation. Organizations known for valuing different perspectives benefit from stronger applicant pools and better retention of diverse hires. This virtuous cycle reinforces itself as increased diversity further enhances reputation.

Recruiting team diversity brings multiple perspectives to candidate evaluation. When hiring panels include members from different backgrounds, this naturally reduces the influence of any single group's biases. Diverse teams also better understand how to attract and assess candidates from various backgrounds.

Incentive structures should reward quality of hire and diversity outcomes rather than speed alone. When recruiters face pressure to fill positions quickly, they may rely on cognitive shortcuts that enable bias. Balanced metrics that consider multiple dimensions of hiring success promote more thoughtful decision-making.

Stories and narratives about inclusive hiring reinforce cultural values. Organizations should celebrate instances where structured processes identified excellent candidates who might have been overlooked under biased evaluation. These examples make abstract principles concrete while demonstrating business benefits.

Candidate Experience and Employer Branding

Bias in recruitment affects organizational reputation among potential applicants. Candidates who experience unfair treatment share these stories, damaging employer brand and reducing application quality. Conversely, fair processes enhance reputation and attract stronger talent.

Communication throughout the recruitment process signals organizational values. Transparent timelines, respectful interactions, and substantive feedback demonstrate commitment to treating candidates well. These practices benefit all applicants while particularly impacting how underrepresented groups perceive the organization.

According to insights on overcoming bias in recruiting, candidate experience begins before applications are submitted. Job descriptions, career site content, and recruitment marketing materials all convey messages about inclusivity. Organizations should audit these materials for bias and ensure they attract diverse applicants.

Alternative application pathways accommodate different candidate strengths. Some qualified individuals struggle with traditional resume formats or interview settings despite possessing excellent job skills. Offering multiple ways to demonstrate capability increases access for diverse talent.

Measuring Success and Demonstrating Impact

Diversity metrics provide basic indicators of progress in reducing bias. Organizations should track demographic representation across hiring stages, comparing outcomes to relevant benchmark populations. Improvements in these metrics suggest successful bias reduction, though numbers alone cannot prove causation.

Quality of hire measurements connect recruitment processes to business outcomes. When bias reduction efforts correlate with improved performance, retention, and engagement among new hires, this demonstrates both fairness and effectiveness. Organizations should analyze whether diverse hires succeed at equal or higher rates than majority group members.

Time to productivity indicates how well the selection process identifies candidates who can contribute quickly. Biased evaluation often focuses on superficial characteristics rather than actual capability, resulting in longer ramps to full performance. Reduced time to productivity suggests more accurate assessment.

Employee satisfaction surveys should examine whether new hires from different backgrounds report similar experiences during recruitment and onboarding. Disparities in satisfaction signal that bias may be affecting candidate treatment even if demographic representation appears adequate. Many organizations now incorporate best practices from leading hiring software to ensure consistent candidate experiences.

Retention analysis reveals whether diverse hires remain with the organization at comparable rates to majority group members. High turnover among underrepresented groups often indicates that recruitment processes did not accurately assess fit or that organizational culture fails to support inclusion beyond hiring.

Future Directions in Bias Reduction

Emerging technologies promise new capabilities for identifying and preventing bias in recruitment. Advanced AI systems may eventually recognize subtle bias patterns that escape human detection while ensuring their own decision-making remains fair. However, these tools require ongoing development and validation to fulfill their potential.

Research from comprehensive studies on fairness in AI recruitment points toward integrated approaches combining technological and human elements. The most effective systems leverage AI strengths in consistent evaluation while preserving human judgment for contextual considerations that machines handle poorly.

Predictive fairness represents an evolving concept that examines whether selection systems produce equitable outcomes over time. Rather than simply ensuring identical treatment during assessment, this approach considers whether hiring decisions lead to similar success rates across demographic groups. Organizations increasingly recognize that identical processes may not produce equitable results when candidates face different barriers.

Cross-industry collaboration enables sharing of best practices and collective problem-solving. Bias in recruitment affects all sectors, and lessons learned in one context often apply broadly. Professional associations and research institutions facilitate knowledge exchange that accelerates progress.

Regulatory developments will likely establish stricter requirements for demonstrating fair hiring practices. Organizations that proactively address bias position themselves advantageously as legal standards evolve. Early adoption of rigorous fairness measures creates competitive advantages in talent acquisition.

Addressing bias in recruitment requires systematic approaches that combine awareness, structural interventions, and continuous improvement. Organizations that successfully minimize bias gain access to broader talent pools while making more accurate hiring decisions. Modern AI-powered recruitment platforms offer powerful tools for standardizing evaluation and reducing subjective judgment. Klearskill helps organizations overcome recruitment bias through AI-driven CV analysis that evaluates candidates based on job-relevant qualifications, delivering ranked shortlists in moments while ensuring consistent, objective assessment across all applicants.